We develop apps your customers love to tap

Want an app that makes a real difference to your customer engagement? You’ve come to the right team. Our superskilled iOS and Android developers build apps that are user-friendly, delivered within budget and designed to create quality engagement with your customers. In short: apps your customers love to tap.

Get in touch

Why you can count on us to develop great apps

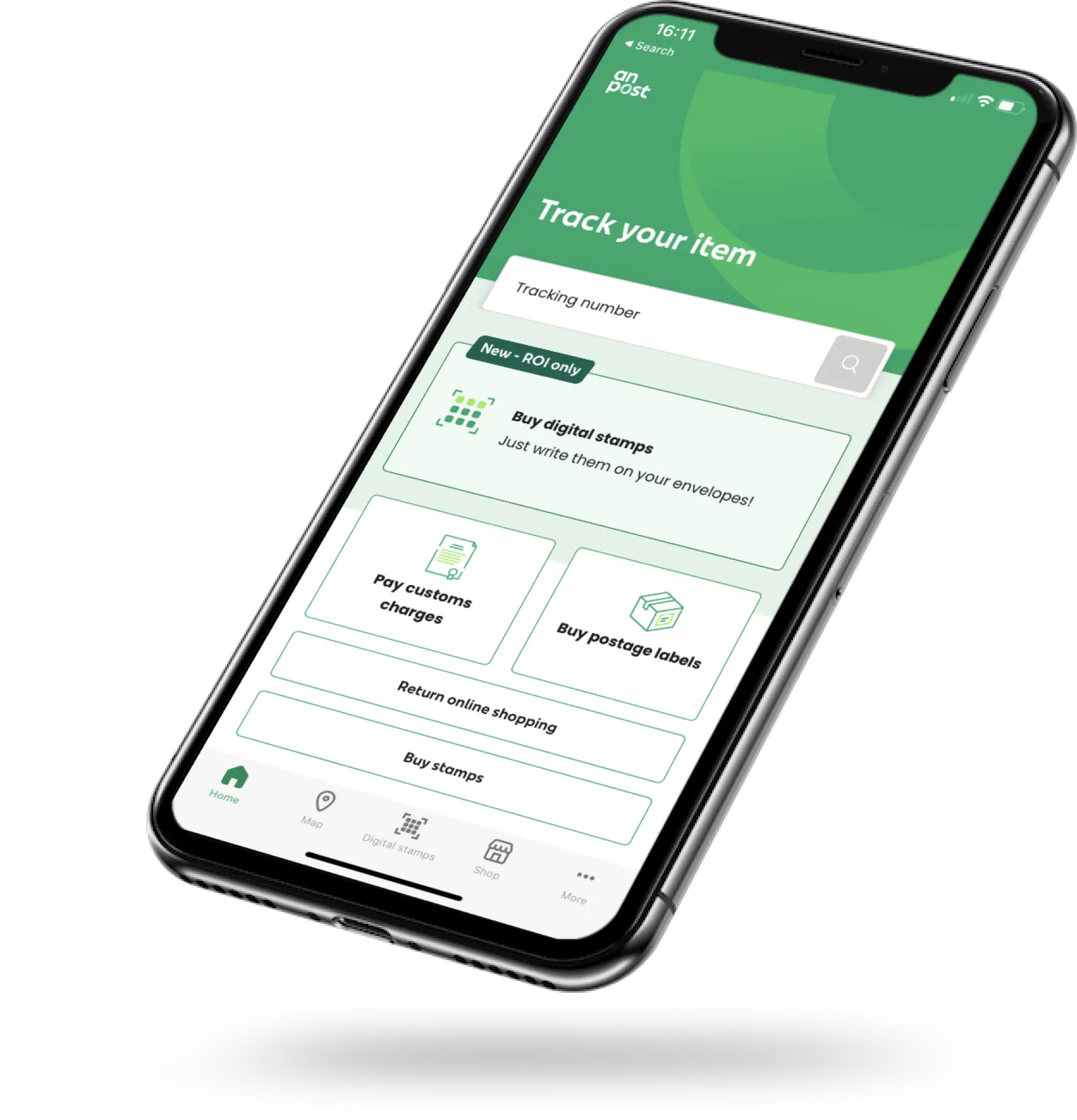

We make apps your customers want

We have the skillset and expertise to make apps that are user-friendly and convenient, and that make a practical difference in your customers’ everyday lives.

We develop apps, and nothing else

Our teams of developers are all about building apps and nothing else, so, unlike our competitors, we bring a single-minded focus to the task of building engaging apps on time and within budget.

We develop apps our clients love

Our iOS and Android developers have a long and successful track record of making effective apps that have transformed their clients’ customer engagement.


Your long-term app partner

Just as you want to build a lasting relationship with your customers, we like to build a lasting partnership with our clients. That way, once we’ve developed your app, we can optimise its performance and update it with the latest tech and functionality, continually improving how you serve your customers’ needs.

Get in touch

The benefits of building an app

There are many reasons why your company may need an app, including:

Helping customers manage tasks

Building an app helps your customers better manage their tasks and interactions with your business.

Finding more innovative ways to interact

Apps easily integrate the latest tech and trends, leading to even more innovative ways to serve your customers’ needs.

Allowing your customers to self-serve

Self-service improves the customer experience, reduces calls to your helplines and frees up staff for more valuable tasks.

Keeping customer data more secure

You can collect and store your customer data more securely and better protect your customers’ privacy.

Collecting & improving customer data

Apps can be a great way to understand your customers’ needs and deliver long-term value to them in the way they want it.

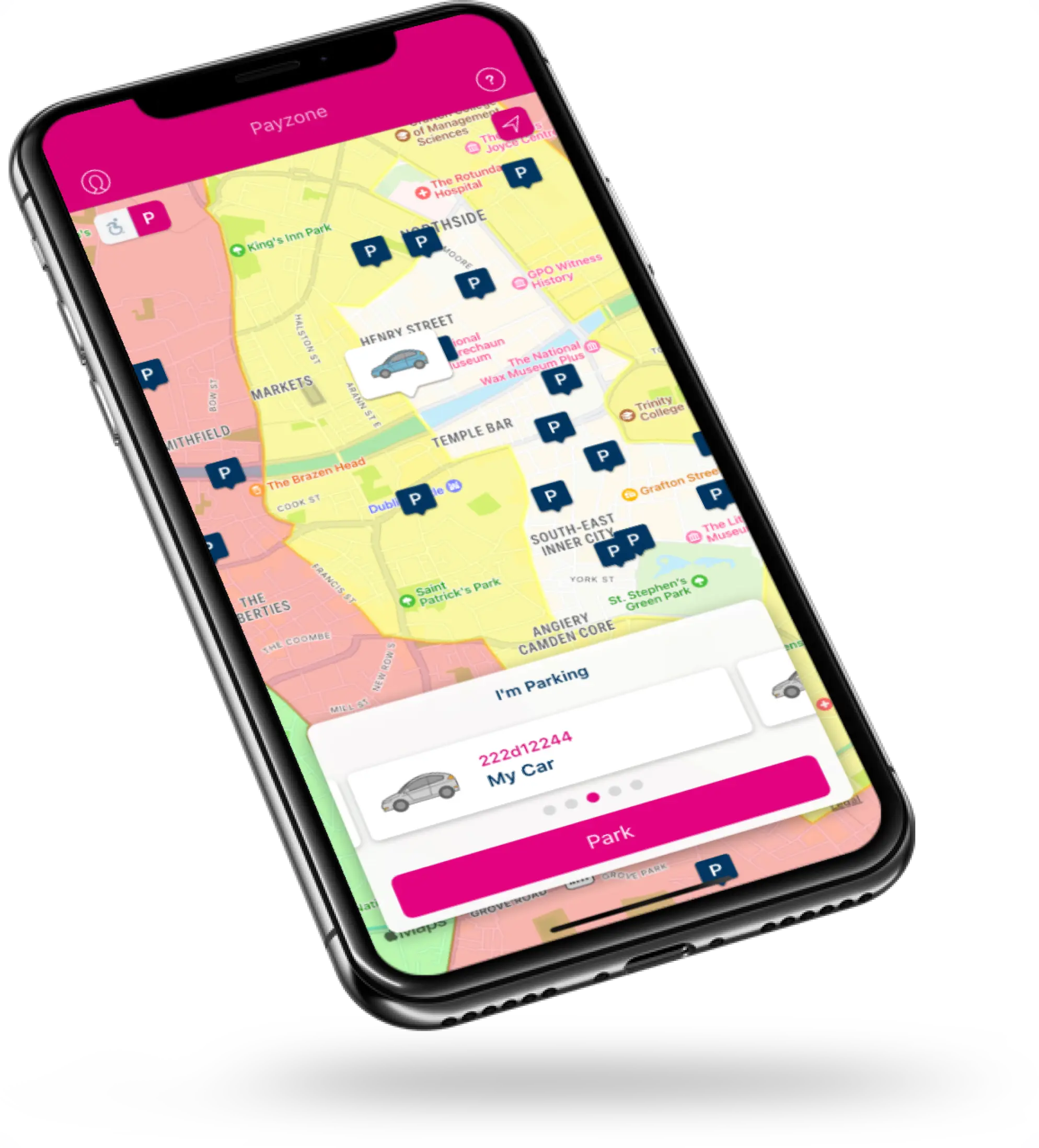

We engaged with Tapadoo on the Parking Tag project over six years ago. They brought exceptional technical acumen and professionalism in building and deploying a very successful app. The Payzone team have an excellent relationship with Tapadoo and together we have continued to add various features to the app that have significantly contributed to the product’s overall success.

Michelle Hughes

Business Partner Manager, Payzone

Want to build an app your customers will love?

Get in touch